Compact deep neural network models of the visual cortex

TL;DR

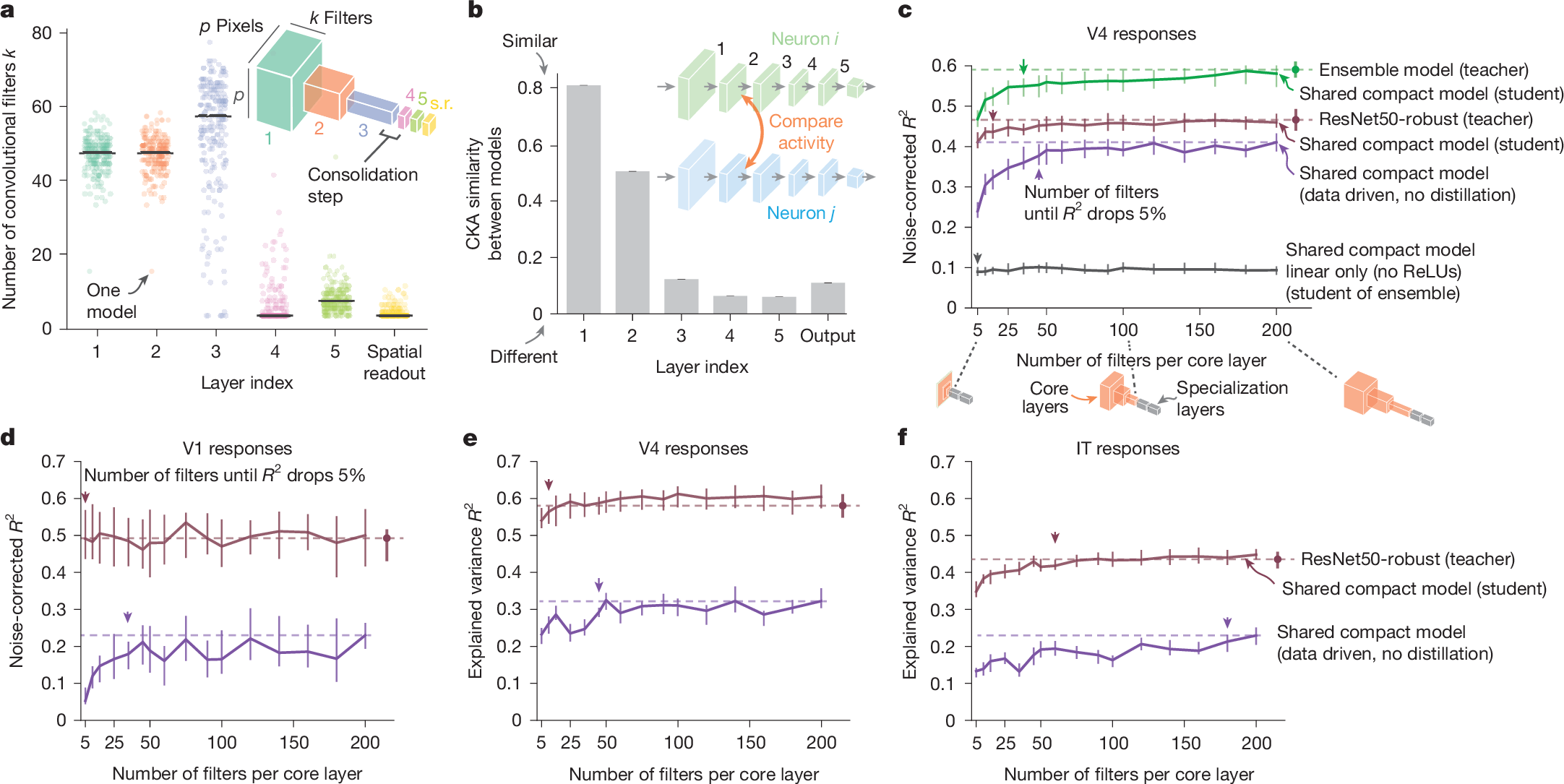

Researchers built compact deep neural network (DNN) models of the primate visual cortex by compressing a 60-million-parameter model into models with 5,000 times fewer parameters and comparable accuracy. The compact models revealed a computational motif: they share similar filters in early processing but specialize their feature selectivity later. The work challenges the need for large DNNs and offers insight into neural mechanisms.
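To make the scale of the compression concrete, the short calculation below divides the two figures quoted in the summary. Both figures are rounded in the source, so the result is only an order-of-magnitude estimate of the compact model size; the variable names are illustrative.

```python
# Figures quoted in the summary (both rounded in the source).
large_params = 60_000_000      # parameters in the large DNN model
compression_factor = 5_000     # "5,000 times fewer parameters"

# Approximate size of a compact model implied by those two figures.
compact_params = large_params // compression_factor
print(f"approx. compact model size: {compact_params:,} parameters")
```

Under these rounded figures, a compact model has on the order of ten thousand parameters.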

Key Takeaways

- Compact DNN models with 5,000 times fewer parameters can predict neural responses in primate visual cortex as accurately as large models.

- These models share similar filters in early processing but specialize feature selectivity through distinct consolidation steps.

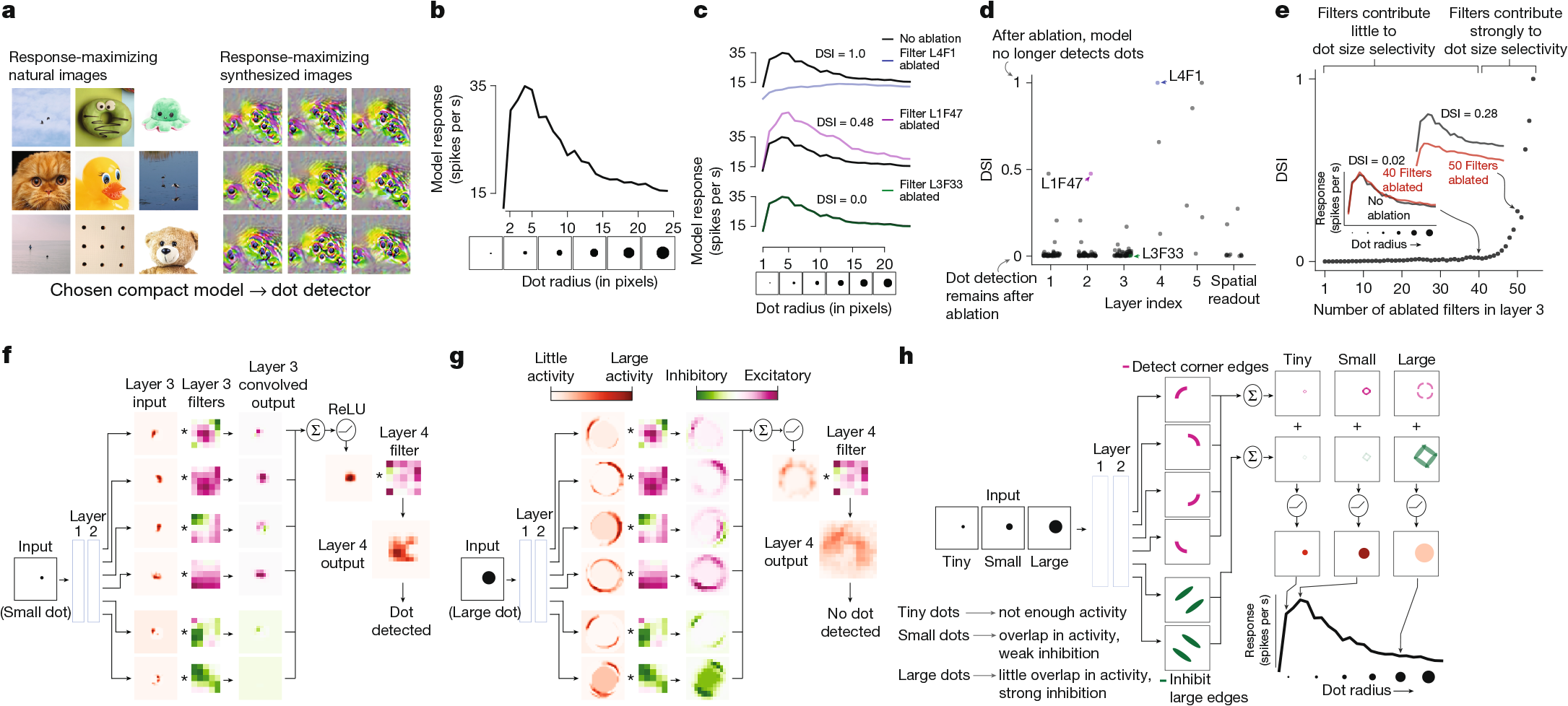

- The approach makes it possible to investigate the models' inner workings, such as the computational mechanism behind dot-selective neurons in V4.

- Model compression was effective across multiple visual areas (V1, V4, IT), suggesting a general computational principle.

- The work challenges the notion that large DNNs are necessary for predicting individual neurons and balances prediction with parsimony.

Abstract

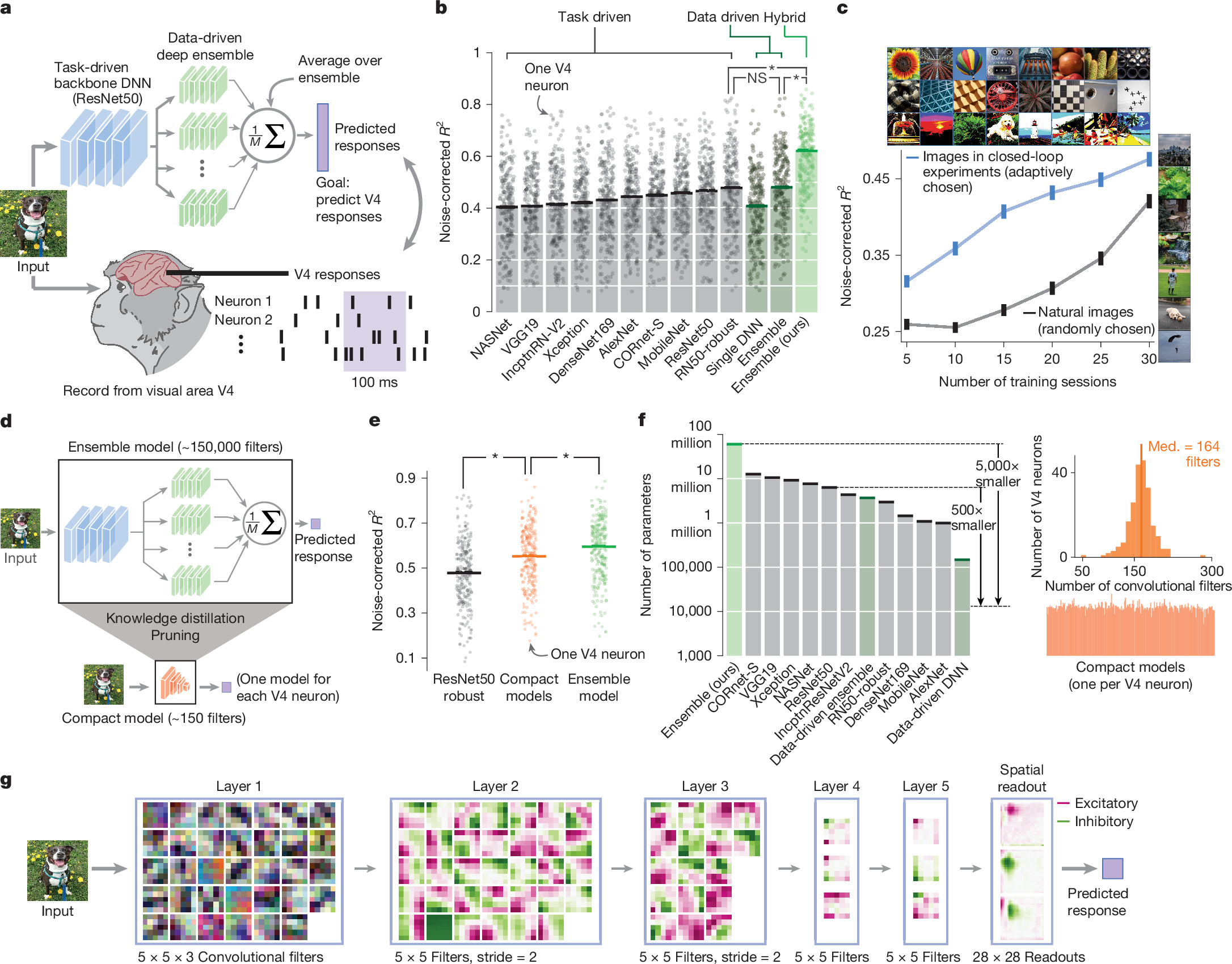

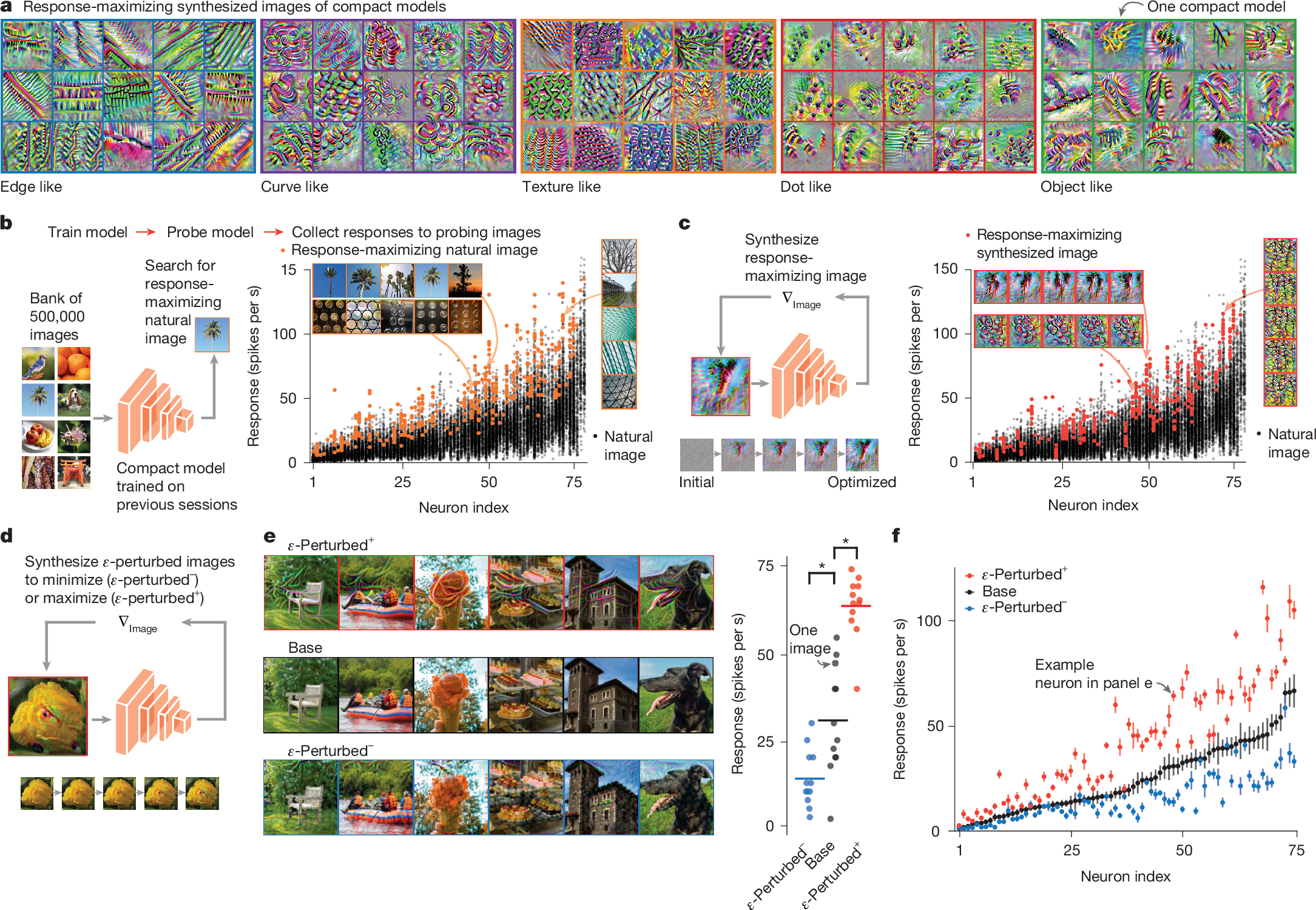

A powerful approach to understand the computations carried out by the visual cortex is to build models that predict neural responses to any arbitrary image. Deep neural networks (DNNs) have emerged as the leading predictive models (refs. 1,2), yet their underlying computations remain buried beneath millions of parameters. Here we challenge the need for models at this scale by seeking predictive and parsimonious DNN models of the primate visual cortex. We first built a highly predictive DNN model of neural responses in macaque visual area V4 by alternating data collection and model training in adaptive closed-loop experiments. We then compressed this large, black-box DNN model, which comprised 60 million parameters, to identify compact models with 5,000 times fewer parameters yet comparable accuracy. This dramatic compression enabled us to investigate the inner workings of the compact models. We discovered a salient computational motif: compact models share similar filters in early processing, but individual models then specialize their feature selectivity by ‘consolidating’ this shared high-dimensional representation in distinct ways. We examined this consolidation step in a dot-detecting model neuron, revealing a computational mechanism that leads to a testable circuit hypothesis for dot-selective V4 neurons. Beyond V4, we found strong model compression for macaque visual areas V1 and IT (inferior temporal cortex), revealing a general computational principle of the visual cortex. Overall, our work challenges the notion that large DNNs are necessary to predict individual neurons and establishes a modelling framework that balances prediction and parsimony.
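The motif described in the abstract, shared early filters followed by a neuron-specific consolidation step, can be illustrated with a toy NumPy sketch. This is not the authors' architecture: the abstract does not specify layer types or sizes, so the single filtering stage, the linear channel-and-space readout, all array sizes, and the function names `shared_early_filters` and `consolidate` are assumptions chosen only to show how two model neurons could reuse one filter bank while differing in how they combine it.

```python
import numpy as np

rng = np.random.default_rng(0)

def shared_early_filters(image, filters):
    """Cross-correlate one grayscale image with a shared filter bank, then ReLU.

    image: (H, W); filters: (K, k, k); returns (K, H-k+1, W-k+1).
    """
    k = filters.shape[-1]
    # All k-by-k patches of the image: shape (H-k+1, W-k+1, k, k).
    patches = np.lib.stride_tricks.sliding_window_view(image, (k, k))
    responses = np.einsum("hwij,kij->khw", patches, filters)
    return np.maximum(responses, 0.0)

def consolidate(features, channel_weights, spatial_weights):
    """Hypothetical neuron-specific readout: mix channels, weight space, rectify."""
    mixed = np.einsum("k,khw->hw", channel_weights, features)
    return float(max(np.sum(mixed * spatial_weights), 0.0))

# Assumed toy sizes: a 16x16 image and four shared 5x5 filters.
image = rng.standard_normal((16, 16))
filters = rng.standard_normal((4, 5, 5))
features = shared_early_filters(image, filters)  # shape (4, 12, 12)

# Two model neurons reuse the same features but consolidate them differently.
neurons = {
    name: {
        "channel_weights": rng.standard_normal(4),
        "spatial_weights": rng.standard_normal((12, 12)),
    }
    for name in ("neuron_a", "neuron_b")
}
responses = {name: consolidate(features, **w) for name, w in neurons.items()}

n_shared = filters.size        # parameters shared by every model neuron
n_per_neuron = 4 + 12 * 12     # parameters specific to one model neuron
print(features.shape, n_shared, n_per_neuron, responses)
```

In this toy setting the filter bank is computed once and each additional model neuron only adds its own readout weights, which is one way to picture how specialization could arise from a shared early representation.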

Data availability

Raw data, including spike timing for all recording sessions, are available (ref. 97). Processed responses for model training and evaluation, as well as stimulus images, are available on GitHub (https://github.com/cowleygroup/V4_compact_models). Source data are provided with this paper.

Code availability

All spike sorting was performed using custom MATLAB software available on GitHub (https://github.com/smithlabvision/spikesort). Model weights and code are available on GitHub (https://github.com/cowleygroup/V4_compact_models).

References

Yamins, D. L. & DiCarlo, J. J. Using goal-driven deep learning models to understand sensory cortex. Nat. Neurosci. 19, 356–365 (2016).

Kriegeskorte, N. Deep neural networks: a new framework for modeling biological vision and brain information processing. Annu. Rev. Vis. Sci. 1, 417–446 (2015).

Heeger, D. J. Half-squaring in responses of cat striate cells. Vis. Neurosci. 9, 427–443 (1992).

Rust, N. C., Schwartz, O., Movshon, J. A. & Simoncelli, E. P. Spatiotemporal elements of macaque V1 receptive fields. Neuron 46, 945–956 (2005).

Pillow, J. W. et al. Spatio-temporal correlations and visual signalling in a complete neuronal population. Nature 454, 995